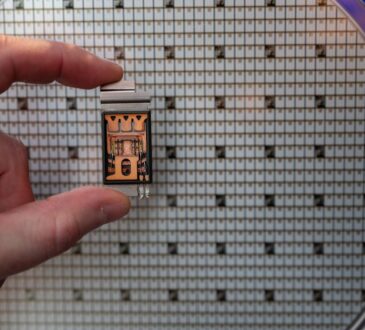

The New York Stock Exchange on Wednesday. Today’s autonomous AI technologies make it much cheaper and easier for cybercriminals to attempt market manipulation. Richard Drew/The Associated Press

Chris Gay is a contributing columnist for The Globe and Mail. He is a former Wall Street Journal staffer and writes the newsletter Figure at Center.

Bad actors have sought to manipulate markets since markets first emerged. We all know the tricks of the trade: insider trading, pump-and-dump schemes, front-running. For centuries, these were the chicaneries of mortal actors limited by the constraints of human ingenuity. Now they are the province of seemingly omniscient robots that may be impossible to restrain.

Does that spell the end of trust in equity markets?

It’s worth noting that malefactors abetted by technology smarter and faster than mere mortals have been manipulating markets for years. Rogue high-speed trading was blamed for the notorious “flash crash” of 2010. (The rogue was prosecuted and convicted.)

Among the fraudster’s favourite techniques are “pinging” and “spoofing.” The former is a type of front-running that involves submitting large volumes of small orders for a security, then cancelling them within nanoseconds, inducing other machines to react and reveal their trading intentions. That provides the pinger valuable counterparty information at scant risk, since the orders go unexecuted.

Spoofing uses artificial intelligence to place fraudulent orders that bait other AI systems to move prices to the benefit of the spoofer. Spoofing and pinging, though illegal in the United States and other countries, continue nonetheless.

These techniques are old, but combined with the relentless advance of AI, they and other illicit tactics pose a whole new level of risk. Think of AI as a cybercrime force multiplier.

“With AI, bad actors and rogue nations can readily and cheaply engage in market manipulation, financial misinformation, and regulatory misconduct that threaten the stability, integrity, and security of our financial system like never before,” Temple University law professor Tom C. W. Lin wrote in a paper published last year. “The intelligence behind this new technology may be artificial, but the damage and losses in market value and confidence are very real.”

Today’s autonomous AI technologies, including malignant tools such as the dark web-based FraudGPT, designed to create fake content for fraud and cyberattacks, make it much cheaper and easier for cybercriminals to attempt market manipulation. That, Prof. Lin wrote, “creates new, intertwined, and systemic risks related to speed and opacity; exacerbates ongoing geopolitical threats; and increases the likelihood of major financial accidents.”

Among the more pernicious AI tactics are financial deepfakes. We don’t have to speculate about the consequences; they’ve already happened. Three years ago, a deepfake photo of an explosion near the Pentagon briefly knocked US$500-billion off the S&P 500, a dip some devious instigator could have exploited.

Bad actors could fake the launch of game-changing products and profit on the momentary stock-price spike. They could likewise deepfake an executive in some compromising situation and reap illicit profits on the dip or the rebound.

Enough fakery of that order can produce “truth decay” that erodes essential confidence, especially among meme-driven retail traders who play an increasingly significant role in the marketplace. This in turn allows actual malefactors, particularly state actors, to convince a jaded public that accusations against them are just fake news. The benefit of the doubt is what some call a “liar’s dividend.” (The opposite is what Prof. Lin calls a “truth teller’s tax” – the resources a defamed party must expend to dispel a lie.)

Two other facets of AI could abet criminals and generate systemic risk: financial velocities that are “too fast to stop” and proprietary algorithms that Prof. Lin terms “too opaque to understand.” This innate opacity also complicates prosecution of cybercriminals.

Stigmatize the market sufficiently, and rational investors would no more trust it than they’d trust a car without brakes. Withdrawal from the market and cash hoarding could ensue.

Lawmakers are not helpless here. Europe is implementing its AI Act, which outlaws various forms of AI fraud, and several U.S. states are taking separate measures. The U.S. Securities and Exchange Commission last year created a Cyber and Emerging Technologies Unit to protect retail investors. Canada has its own guidelines.

Prof. Lin suggests bolstering incentives for better risk management and penalties for AI-related misconduct, as well as encouraging passive long-term investments, especially for retail investors. The International Monetary Fund wants better calibration of “circuit breakers” (automatic trading halts to subdue a panic) and enhanced monitoring of large traders employing AI, including non-bank firms.

But given the speed with which AI evolves, overwhelmed and underresourced regulators will always be playing catch-up, and rules could become obsolete as soon as they’re implemented. And, as usual in American politics especially, powerful corporate interests will pull out every stop to nix regulations they don’t like.

No one can know for sure what AI has in store for the market, but one thing seems certain: Whatever it can manipulate, it will manipulate.